Artificial Intelligence (AI) is no longer a futuristic concept; it is the primary engine for modern business and societal innovation. While the benefits are immense, AI introduces unique risks that traditional software governance cannot fully address. These range from algorithmic bias and privacy breaches to safety hazards and the erosion of information integrity.

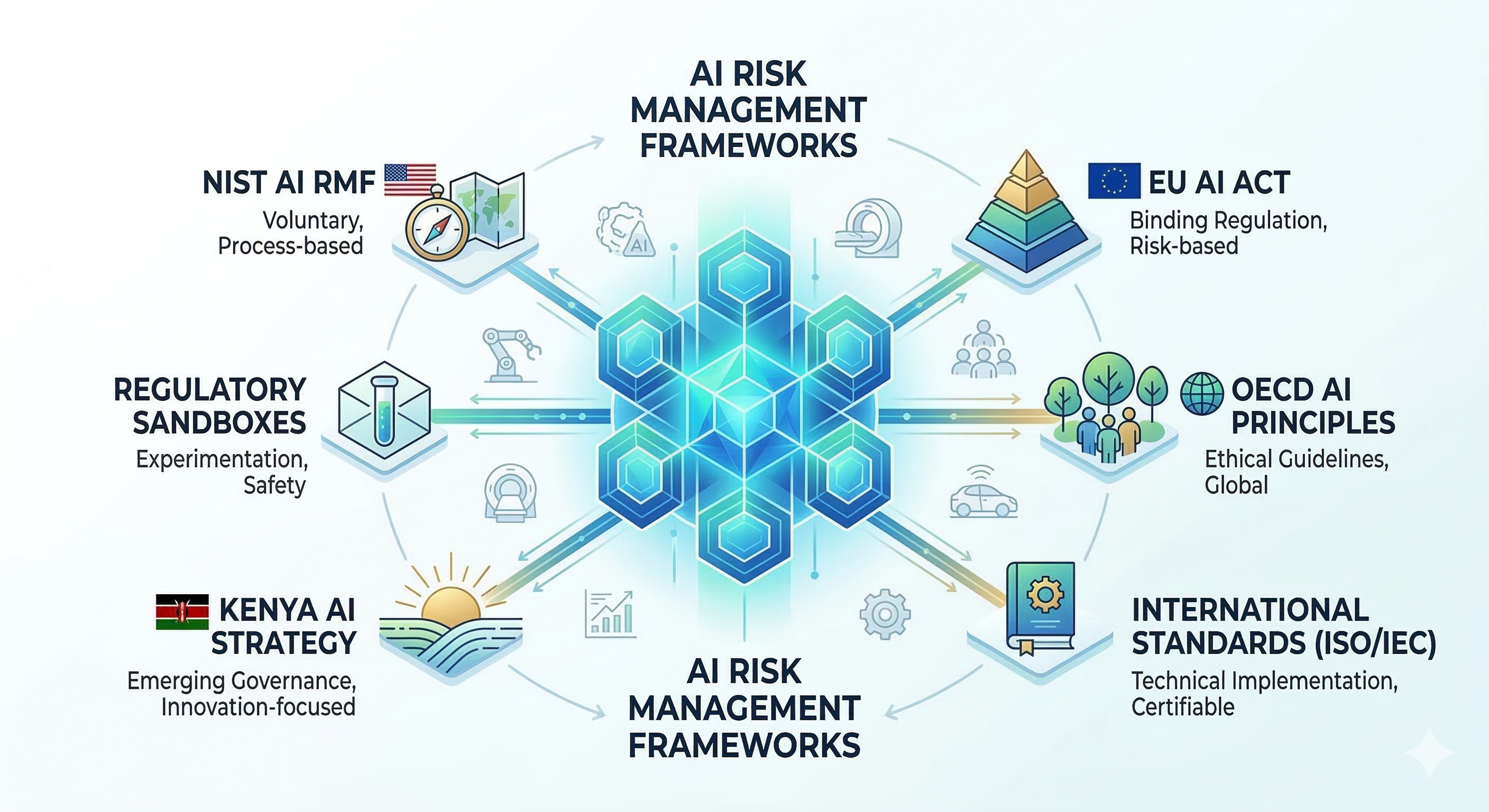

To navigate this, governments and international bodies have developed structured risk management frameworks. These frameworks help organizations identify, assess, and mitigate risks, ensuring that AI systems are not just capable but also trustworthy, secure, and fair.

1. NIST AI Risk Management Framework (AI RMF 1.0)

Published in January 2023 by the U.S. National Institute of Standards and Technology, the NIST AI RMF is one of the most widely referenced voluntary frameworks worldwide, especially among organizations seeking a flexible, non-regulatory approach. It moves beyond technical security to address "socio-technical" risks, meaning it looks at how technology interacts with people and society.

The Four Core Functions

The framework is organized around four continuous functions that should be integrated into the AI lifecycle:

- Govern: This is the foundation. It involves creating a culture of risk management, defining clear roles, and ensuring leadership is accountable for AI outcomes.

- Map: Organizations must contextualize the AI. This means identifying the specific use case, the intended users, and the potential negative impacts on individuals or groups before the system is built.

- Measure: This function uses quantitative and qualitative tools to assess the risks identified in the Map phase. It tracks metrics for bias, accuracy, and reliability.

- Manage: Once risks are measured, organizations must act. This involves prioritizing risks, deciding how to treat or mitigate each one, and decommissioning a system altogether if the risks are too high.
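
To make the Measure function concrete, here is a minimal sketch of one common bias metric, the demographic parity difference (the gap in positive-outcome rates between groups). The function names, group labels, and sample data are hypothetical and are not part of the NIST framework, which does not prescribe a specific metric.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Return the share of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(groups, predictions):
    """Largest gap in selection rate between any two groups (0.0 means parity)."""
    rates = selection_rates(groups, predictions)
    return max(rates.values()) - min(rates.values())


# Hypothetical data: group membership and the model's yes/no output per applicant.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)
gap = demographic_parity_difference(groups, predictions)
print(rates, gap)
```

A gap near zero suggests similar treatment across groups on this one metric. What gap counts as acceptable is a policy decision for the Govern and Manage functions, not something the code can settle.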

Characteristics of Trustworthy AI

NIST defines seven key attributes that every AI system should strive for:

- Valid and Reliable: The system does what it claims to do consistently.

- Safe: It does not cause physical or psychological harm.

- Secure and Resilient: It can withstand attacks and recover from failures.

- Accountable and Transparent: Decisions can be traced back to their source.

- Explainable and Interpretable: Users can understand how the AI reached a specific conclusion.

- Privacy-Enhanced: Data usage follows strict privacy-preserving protocols.

- Fair, with Harmful Bias Managed: The system does not produce discriminatory outcomes.

2. The EU AI Act: The World’s First Comprehensive AI Law

Unlike the voluntary NIST framework, the EU AI Act is a binding regulation with extraterritorial reach. If you place an AI system on the EU market, deploy one in the EU, or produce output that is used in the EU, you must comply, wherever your organization is based.

The Risk-Based Hierarchy

The Act categorizes AI systems into four tiers, each with different requirements:

| Risk Level | Definition | Examples | Obligations |
|---|---|---|---|
| Unacceptable | Systems that pose a clear threat to safety or rights. | Social scoring, manipulative AI, real-time biometric ID in public. | Banned: Must be removed from the market. |
| High-Risk | Systems used in critical infrastructure or essential services. | Recruitment tools, credit scoring, medical devices, law enforcement. | Strict Compliance: Logging, human oversight, and conformity assessments. |
| Limited Risk | Systems with specific transparency needs. | Chatbots, deepfakes, emotion recognition. | Transparency: Users must know they are interacting with an AI. |
| Minimal Risk | Applications with little to no risk. | Spam filters, AI-enabled video games. | Voluntary: No mandatory requirements, but codes of conduct are encouraged. |

Key Deadline: As of early 2026, most provisions for high-risk systems are nearing their enforcement date of August 2, 2026. Organizations are currently in the final stages of performing mandatory conformity assessments.
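
The four tiers in the table above can be kept as a simple lookup for first-pass triage of an internal AI portfolio. This is only a sketch: the `RiskTier` enum, the `EXAMPLE_USE_CASES` keywords, and the `triage` helper are hypothetical names built from the table's example column, and a real classification under the Act requires legal review of the system's actual purpose and context.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligations summarized from the table; not a complete legal checklist.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["banned: remove from the market"],
    RiskTier.HIGH: ["logging", "human oversight", "conformity assessment"],
    RiskTier.LIMITED: ["tell users they are interacting with an AI"],
    RiskTier.MINIMAL: [],  # voluntary codes of conduct only
}

# Hypothetical triage keywords taken from the table's examples.
EXAMPLE_USE_CASES = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "recruitment": RiskTier.HIGH,
    "credit scoring": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
}


def triage(use_case):
    """Return (tier, obligations) for a known example, or None if unknown."""
    tier = EXAMPLE_USE_CASES.get(use_case.strip().lower())
    if tier is None:
        return None  # unknown use cases need manual review
    return tier, OBLIGATIONS[tier]


print(triage("Recruitment"))
print(triage("weather forecast"))
```

Returning `None` for anything unrecognized is a deliberate choice: an unknown use case should be escalated to a human reviewer rather than silently treated as minimal risk.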

3. International Standards (ISO/IEC) and OECD Principles

While NIST provides a framework and the EU provides law, international standards provide the "how-to" for technical implementation.

ISO/IEC 42001: The AI Management System (AIMS)

This is widely regarded as the benchmark for organizational AI governance. It follows the same management-system structure as the familiar ISO/IEC 27001 (Information Security) but is tailored for AI. It requires companies to document their AI policies, perform impact assessments, and maintain continuous monitoring. Companies often use certification as supporting evidence when preparing for the EU AI Act, although certification does not by itself establish legal compliance.

OECD AI Principles (2024 Update)

The OECD principles serve as the ethical foundation for over 40 countries. In May 2024, these were updated to address the explosion of Generative AI. The update emphasizes:

- Information Integrity: Tackling AI-generated misinformation and deepfakes.

- Environmental Sustainability: Managing the massive energy and water consumption of large-scale AI models.

- Human Agency: Ensuring humans can always override or shut down (a "kill switch") a system that behaves unexpectedly.

4. Kenya: Emerging AI Governance and Industry Implementation

Kenya has positioned itself as a leader in the African digital landscape with the launch of the National AI Strategy 2025–2030 in March 2025.

The Kenyan Approach

Kenya is currently following a "soft-law" approach, prioritizing innovation while building the regulatory foundation. The strategy focuses on three main pillars:

- Infrastructure: Developing local cloud computing and high-performance computing (HPC) power.

- Data Governance: Utilizing the Data Protection Act (2019) to ensure AI training data is handled ethically.

- Strategic Sectors: Prioritizing AI deployment in agriculture (crop monitoring), healthcare (diagnostic support), and fintech.

The Kenyan government is currently using "regulatory sandboxes," which allow companies to test AI solutions in a controlled setting with lighter requirements. This helps policymakers understand the risks before drafting the formal Kenya AI Bill, which is expected to move toward Parliament later this year.

5. Challenges and Strategic Recommendations

Even with these frameworks, leaders face significant implementation hurdles.

Key Challenges

- The Compliance Gap: Many organizations have "AI ethics" on paper but lack the technical tools to measure bias or explainability in real time.

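- Dynamic Risk: Unlike static software, an AI system's behavior can shift after deployment. A model that is safe on Monday might produce biased results by Friday because of "data drift," where live inputs move away from the data the model was trained on.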

- Global Fragmentation: A company operating in Nairobi, Brussels, and New York must juggle three different sets of rules and standards.
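
The "data drift" challenge above can be monitored with a simple statistic. The sketch below uses the Population Stability Index (PSI), one common choice among several, to compare a baseline sample of a feature with a recent sample. The function name, bin count, and sample data are assumptions for illustration, and the frequently quoted alert thresholds (around 0.1 and 0.25) are industry rules of thumb rather than requirements of any framework discussed here.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: training-time baseline vs. this week's inputs.
baseline = list(range(100))
shifted = [v + 50 for v in baseline]

print(psi(baseline, baseline))  # identical samples: no drift
print(psi(baseline, shifted))   # shifted sample: large PSI
```

A scheduled job could compute this per feature and raise an alert when the value crosses an agreed threshold, turning "safe on Monday, biased by Friday" into something a team can detect.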

Recommendations for Leaders

- Establish an AI Inventory: You cannot manage what you do not know. Map every AI tool currently in use, including "Shadow AI" brought in by employees via browser extensions.

- Adopt ISO/IEC 42001 Early: Even if not required by law, this standard provides a robust, auditable structure that makes it easier to adapt your operations to upcoming regulation.

- Cross-Functional Oversight: AI risk is not just an IT problem. Create a committee that includes legal, HR, and data science teams to assess impacts holistically.

- Prioritize Human-in-the-Loop Review: For any high-impact decision, such as hiring or lending, ensure a human expert has the final say and the power to override the AI.
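
Two of the recommendations above, the AI inventory and human-in-the-loop review, can be sketched together in a few lines. Everything here is hypothetical: the `AISystem` fields, the `final_decision` helper, and the sample entries are illustrative assumptions, not a schema taken from any of the frameworks discussed.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    owner: str
    high_impact: bool      # e.g. hiring or lending decisions
    approved: bool = True  # False marks "Shadow AI" found but not yet reviewed


def final_decision(system, model_recommendation, human_decision=None):
    """Apply the human-in-the-loop rule: high-impact systems need a human decision."""
    if system.high_impact:
        if human_decision is None:
            raise ValueError(f"{system.name}: human review required")
        return human_decision  # the human's call overrides the model
    return model_recommendation


# Hypothetical inventory entries.
inventory = [
    AISystem("resume-screener", owner="HR", high_impact=True),
    AISystem("spam-filter", owner="IT", high_impact=False),
    AISystem("browser-summarizer", owner="unknown", high_impact=False, approved=False),
]

shadow_ai = [s.name for s in inventory if not s.approved]
print(shadow_ai)
print(final_decision(inventory[0], "reject", human_decision="advance"))
```

Raising an error when no human decision is supplied makes the override requirement hard to bypass by accident; a production system would more likely queue the case for review than fail outright.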