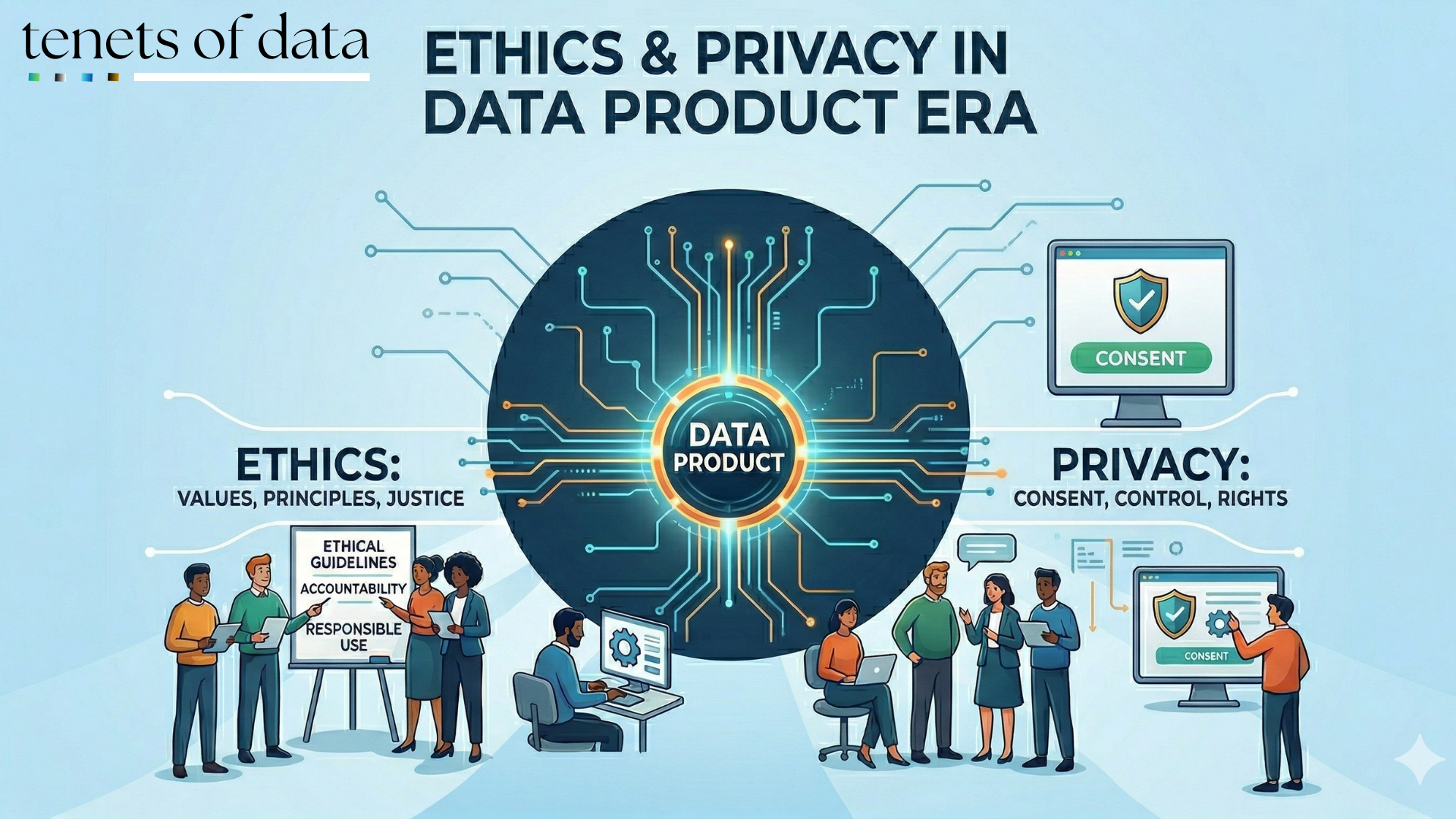

Introduction

Imagine for a moment our favorite analogy: the Smart Parcel (see "A Deep Dive into the Anatomy of a Data Product"). We’ve discussed the "wrapper", the shipping label that tells you where the data came from, and the tracking number that ensures it’s fresh. But now, we need to talk about what happens when the contents of that parcel are so sensitive that their mere existence requires a digital lock that only opens for the right recipient. In the modern data landscape, we are moving past the idea that data is just a byproduct of business; it is a high-value, reusable asset. With that value comes a massive ethical weight.

Treating data as a product isn't just about speed or ROI; it is also about human rights encoded into architecture. If we get this wrong, we don’t just have a data swamp; we have a data security crisis that can cause serious physical, emotional, or reputational harm to the people behind the numbers.

1. The Ethical Blind Spot - When Data Products Forget People

The biggest danger in the world of automated data products is the black box effect. We often crave the "why" behind a decision, but tools like AutoML (Automated Machine Learning) don't have a built-in concept of fairness. Without human constraints, an automated system understands only the patterns in the data, and those patterns may be riddled with historical biases related to race, gender, or socioeconomic status.

Ethical data products require a shift toward Explainable AI (XAI). This means capturing the rationale behind specific dataset changes in the product’s metadata. When a product owner can explain why a model reached a conclusion, they bridge the gap between technical efficiency and human accountability. Ethical insights must be an inherent part of the data versioning process, ensuring that as a product evolves, its commitment to responsible AI practices stays documented and transparent.
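To make "capturing the rationale in metadata" concrete, here is a minimal sketch of what a version-history entry could look like. The schema, the class names (`DatasetChange`, `ProductMetadata`), and the field names are all hypothetical; they are one possible shape under our own assumptions, not a standard.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class DatasetChange:
    """One entry in a data product's version history (hypothetical schema)."""
    version: str
    changed_on: date
    summary: str               # what changed
    rationale: str             # why it changed, in plain language
    fairness_notes: str = ""   # known bias risks or mitigations, if any


@dataclass
class ProductMetadata:
    name: str
    owner: str
    history: list[DatasetChange] = field(default_factory=list)

    def record_change(self, change: DatasetChange) -> None:
        # Refuse undocumented changes so the "why" is never optional.
        if not change.rationale.strip():
            raise ValueError("every dataset change needs a rationale")
        self.history.append(change)

    def explain(self, version: str) -> str:
        """Return a human-readable explanation for one version."""
        for change in self.history:
            if change.version == version:
                return f"{change.version}: {change.summary} (why: {change.rationale})"
        raise KeyError(version)
```

The design choice worth noting is that `record_change` rejects an empty rationale, so the explanation travels with every version instead of living in someone's memory.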

2. Privacy Engineering

If ethics is the "why," privacy engineering is the "how." It starts with the principle of Privacy-by-Design, where privacy measures are built into the very architecture of our information systems and data products.

One of the most powerful implementations of this is the Mini-Vault approach. Instead of one giant data lake (which acts as a "honeypot" for hackers), some organizations manage millions of high-performance Micro-Databases, one for each customer, each secured with its own unique encryption key. This isolated, resilient structure limits the blast radius: if one record or key is compromised, only that customer's data is exposed, which sharply reduces the risk of a mass data breach.

Beyond storage, we use technical muscle to protect privacy during analysis:

- Data Minimization: We must design collection processes to ensure we only gather the minimum personal information necessary for the product’s purpose. Data protection laws, including Kenya's and the EU's GDPR, make this a core principle.

- Consistent De-identification: When we de-identify data, the same input must always map to the same output across all locations in our mesh. This allows teams to safely join on an identifier without ever knowing the person's real name.

- Differential Privacy: This adds calibrated random "noise" to the data or to query results, so that the output barely changes whether or not any one person is included. As a result, outliers (the "one-in-a-million" data points) can’t be used to re-identify individuals when combined with other resources.
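The three techniques above can be sketched in a few lines of standard-library Python. This is an illustration under stated assumptions, not a production design: the field allow-list, the function names, and the choice of a keyed hash (HMAC-SHA256, which is strictly pseudonymization) and the Laplace mechanism are ours, and a real deployment would use a vetted differential privacy library and a managed secret.

```python
import hashlib
import hmac
import random

# Hypothetical allow-list: the only fields this product's purpose requires.
ALLOWED_FIELDS = {"customer_id", "order_total", "country"}


def minimize(record: dict) -> dict:
    """Data minimization: drop every field that is not on the allow-list."""
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS}


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Consistent de-identification: same input and key give the same token."""
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def noisy_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Differential privacy (Laplace mechanism) for a counting query.

    A count changes by at most 1 when one person is added or removed,
    so the sensitivity is 1 and the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    # The difference of two exponential draws with rate epsilon
    # is Laplace-distributed with scale 1 / epsilon.
    noise = rng.expovariate(epsilon) - rng.expovariate(epsilon)
    return true_count + noise
```

Because every domain that shares the same secret key gets the same token for the same customer, two teams can join on `pseudonymize(customer_id, key)` without either one seeing the raw identifier. A smaller `epsilon` in `noisy_count` means more noise and stronger privacy, at the cost of accuracy.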

3. Governance as a Sidecar, Not a Policeman

In a traditional organization, security is often a policing function that slows everything down. In a modern Data Mesh, we use the Sidecar Pattern.

Think of the sidecar as a companion process that runs alongside every data product. While the domain team focuses on building a useful product, the sidecar handles the automated governance: it checks access rights, enforces privacy policies, and captures audit logs without the developer having to write that code from scratch. This is Policy-as-Code, i.e., moving governance from static documents to executable guards that block unauthorized queries before they ever run.
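As a rough sketch of the idea, the guard below checks a request against a policy, writes an audit entry for every decision, and only then lets the query run. Everything here (`Request`, `Policy`, `Sidecar`, the role and column names) is hypothetical and in-process for brevity; a real sidecar would typically run as a separate process and use a dedicated policy engine.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class Request:
    user: str
    roles: frozenset[str]
    columns: frozenset[str]


@dataclass(frozen=True)
class Policy:
    """Hypothetical policy: one role may read the sensitive columns."""
    sensitive_columns: frozenset[str]
    allowed_role: str

    def permits(self, request: Request) -> bool:
        touches_sensitive = bool(request.columns & self.sensitive_columns)
        return not touches_sensitive or self.allowed_role in request.roles


@dataclass
class Sidecar:
    policy: Policy
    audit_log: list[dict] = field(default_factory=list)

    def guard(self, request: Request, run_query: Callable[[], list]) -> list:
        allowed = self.policy.permits(request)
        # Every decision is logged, whether allowed or denied.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": request.user,
            "columns": sorted(request.columns),
            "allowed": allowed,
        })
        if not allowed:
            # Block before the query function is ever called.
            raise PermissionError(f"{request.user} may not read sensitive columns")
        return run_query()
```

The domain team only supplies `run_query`; the access check and the audit trail come from the sidecar, and the policy itself is plain data that can be versioned and reviewed like any other code.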

4. The Lifecycle: Knowing When to Let Go

Ethical data management doesn't end when a product is published; it includes Data Lifecycle Management (DLM) and the eventual disposal of data.

Under regulations like GDPR, individuals have a "right to be forgotten." Ethical products must have clear deprecation and deletion procedures. Deletion isn't just clicking a button; it requires secure methods such as cryptographic erasure, where destroying the encryption key renders the encrypted data unreadable, to ensure that sensitive information is truly gone.
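Cryptographic erasure pairs naturally with the per-customer keys of the Mini-Vault approach: if each customer's record is encrypted under its own key, forgetting that customer means destroying one key. The sketch below is a toy under loud assumptions. `CustomerVault` is a hypothetical class, and to stay within the standard library it uses a one-time pad as a stand-in cipher; a real system would use an authenticated cipher such as AES-GCM and destroy keys inside a key management service, not in a Python dictionary.

```python
import secrets


class CustomerVault:
    """Toy per-customer store: ciphertext and key are kept separately."""

    def __init__(self) -> None:
        self._ciphertexts: dict[str, bytes] = {}
        self._keys: dict[str, bytes] = {}  # in practice: a separate key manager

    def store(self, customer_id: str, record: str) -> None:
        plaintext = record.encode("utf-8")
        # Stand-in cipher (assumption): a random one-time pad as long as the record.
        key = secrets.token_bytes(len(plaintext))
        self._keys[customer_id] = key
        self._ciphertexts[customer_id] = bytes(p ^ k for p, k in zip(plaintext, key))

    def read(self, customer_id: str) -> str:
        key = self._keys.get(customer_id)
        if key is None:
            raise KeyError(f"no key for {customer_id}: record is erased or unknown")
        ciphertext = self._ciphertexts[customer_id]
        return bytes(c ^ k for c, k in zip(ciphertext, key)).decode("utf-8")

    def forget(self, customer_id: str) -> None:
        """Cryptographic erasure: destroy the key so the ciphertext,
        including any copies in backups, can no longer be decrypted."""
        self._keys.pop(customer_id, None)
```

After `forget("c-1")`, the ciphertext for that customer may still sit in storage or backups, but `read("c-1")` fails because the only key that could decrypt it is gone. Other customers are untouched, since their keys are independent.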

Conclusion

At the end of the day, data is more than just "the new oil"; it’s the backbone of modern life. When we implement granular observability and privacy engineering at every product node, we are not just avoiding fines; we are building a system of trust.

Organizations that move data like unlabelled boxes in the dark will eventually act surprised when those deliveries fail. But those who embrace the anatomy of a truly self-describing, secure data product turn their data into an infrastructure that supports not just better decisions, but a more equitable and trustworthy future.