Introduction

As of 2026, automation has pushed the traditional discipline of data stewardship toward an event horizon. AI-enabled Data Management Platforms (DMPs) have subsumed the high-volume, repetitive tasks that once occupied 70-80% of a steward’s time. However, this automation has exposed a deeper crisis: Context Poverty. Machines can ensure a column is clean, but they cannot interpret on their own the business logic, tribal knowledge, or situational nuance that autonomous systems need in order to reason correctly.

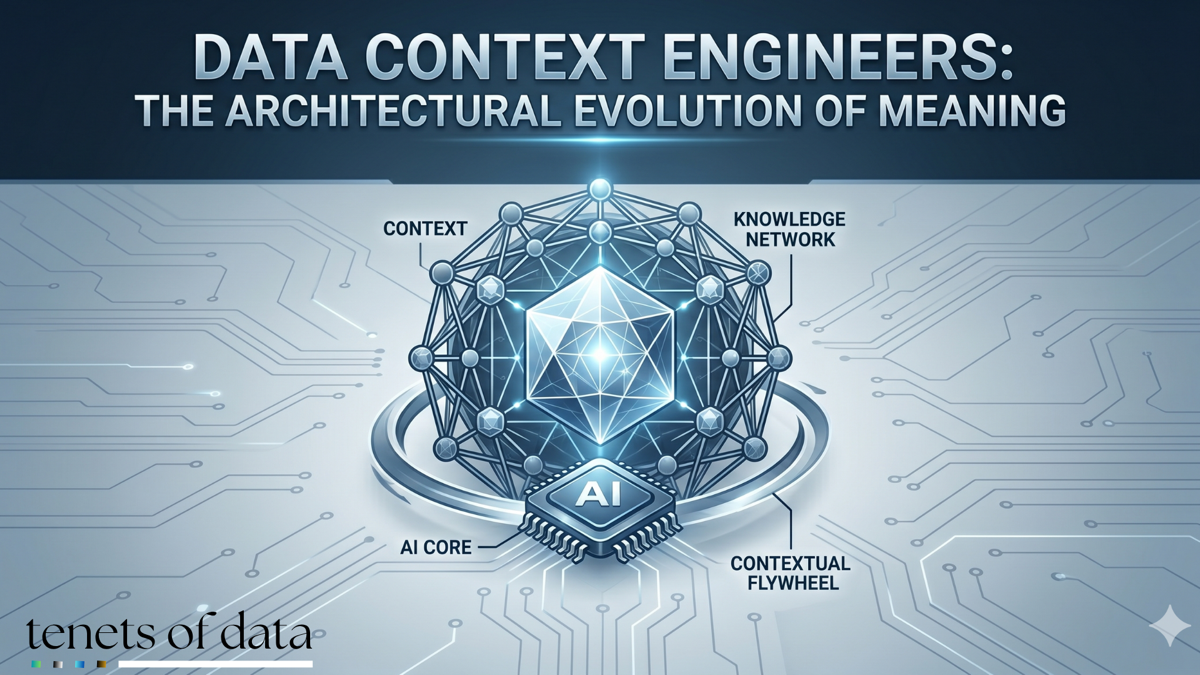

The solution is not to phase out the expert, but to evolve the role into the Data Context Engineer (DCE). This evolved professional moves from being a custodian of records to an architect of meaning, focusing on building the semantic alignment layer that sits between raw data and organizational intelligence.

The Automation Framework

Modern platforms now treat routine data management as an autonomous background service. AI "agents for governance" continuously monitor, tag, and enforce policies with little to no human intervention.

Automated Functions and the Tools Powering Them

| Stewardship Function | AI-Driven Automation Mechanism | Leading Tools (2026) |

|---|---|---|

| Metadata Management | Auto-generation of descriptions; active metadata tags that update based on live usage patterns. | Collibra AI, Atlan, Informatica (CLAIRE) |

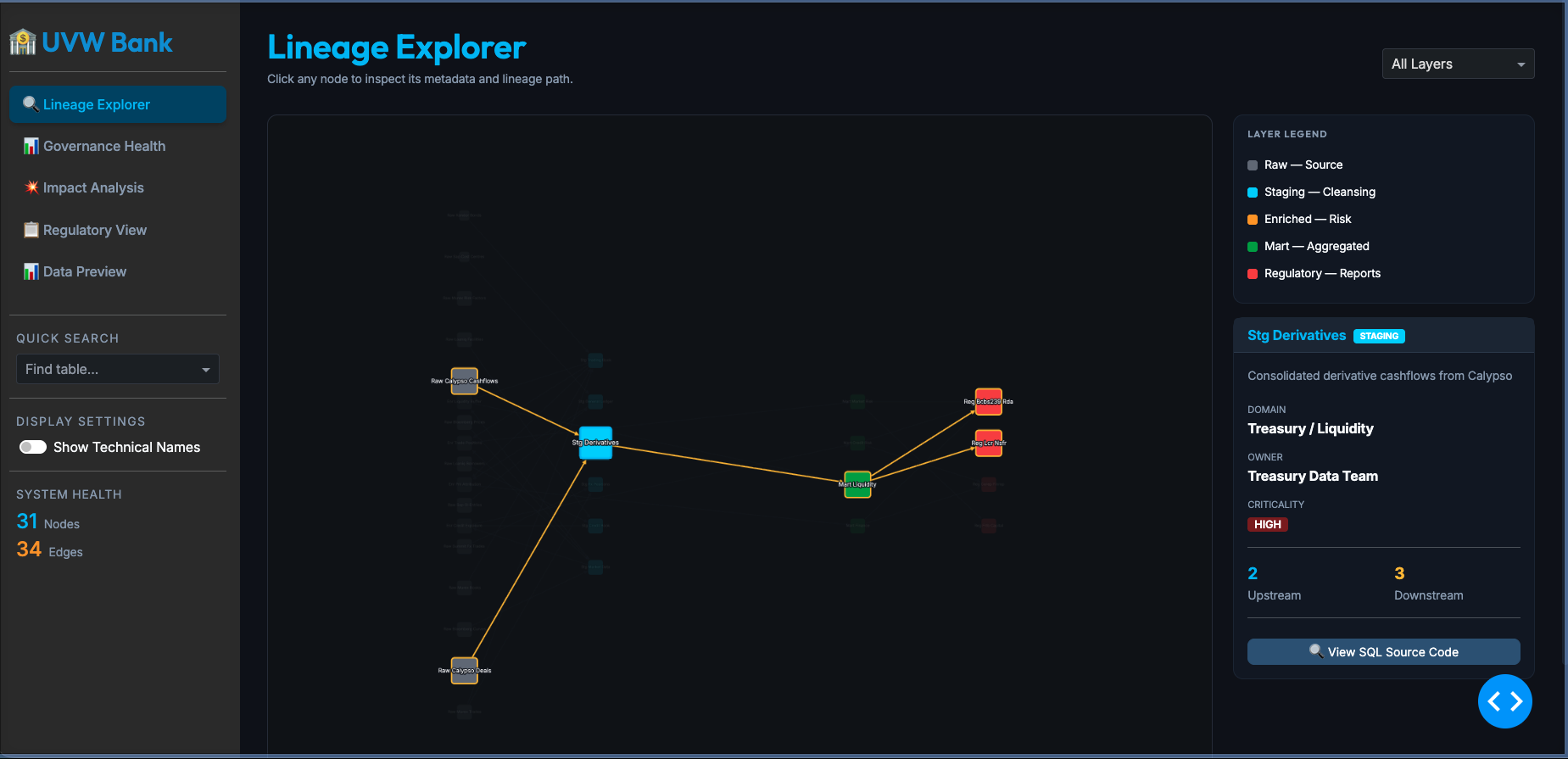

| Lineage Tracking | Automated SQL query log analysis; proactive impact analysis for schema changes in real-time. | Alation, Microsoft Purview, Euno |

| Lifecycle & Retention | AI-driven ROT (Redundant, Obsolete, Trivial) analysis; automated disposal per GDPR/HIPAA mandates. | OneTrust, Concentric AI, Gimmal, Egnyte |

| Data Quality (DQ) | Self-healing pipelines; autonomous detection of drift, null spikes, and schema evolution. | Monte Carlo, Anomalo, Soda, OvalEdge |

| Normalization | LLM-based labeling to map disparate values (e.g., mapping "Onc." to "Oncology") across systems. | Improvado, Semarchy, eZintegrations |

Metadata management, once a manual cataloging exercise, is now driven by tools like Collibra AI and Atlan, which generate descriptions and active tags that update based on live usage patterns.

Lineage tracking and data quality have followed a similar path. Platforms like Microsoft Purview and Alation now use automated SQL query log analysis to provide real-time impact analysis for schema changes. Meanwhile, tools like Monte Carlo and Anomalo have pioneered self-healing pipelines that autonomously detect drift, null spikes, and schema evolution. Even the complex task of normalization is being handled by Large Language Models (LLMs), which map disparate values across systems (such as linking "Onc." to "Oncology") with high precision. This automation has freed humans from the drudge work of data, but it has created a void in the interpretation of that data.
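To make "autonomous detection of null spikes" concrete, here is a minimal sketch that flags a batch whose null rate jumps well above a trailing baseline. The function names, the thresholds, and the sample batches are all assumptions made for illustration; they do not describe how any of the tools named above work internally.

```python
from statistics import mean


def null_rate(values):
    """Fraction of entries in a batch that are None."""
    if not values:
        return 0.0
    return sum(v is None for v in values) / len(values)


def is_null_spike(history, current_batch, factor=3.0, floor=0.05):
    """Flag the current batch when its null rate exceeds both an absolute
    floor and `factor` times the average null rate of earlier batches.
    `factor` and `floor` are illustrative defaults, not recommended values."""
    baseline = mean([null_rate(b) for b in history]) if history else 0.0
    current = null_rate(current_batch)
    return current > floor and current > factor * baseline


# Hypothetical batches of a single column.
history = [
    ["a", "b", None, "d", "e", "f", "g", "h", "i", "j"],  # 10% nulls
    ["a", "b", "c", "d", "e", "f", "g", "h", "i", "j"],   # 0% nulls
]
healthy = ["a", None, "c", "d", "e", "f", "g", "h", "i", "j"]     # 10% nulls
broken = ["a", None, None, None, None, "f", None, "h", "i", "j"]  # 50% nulls

print(is_null_spike(history, healthy))
print(is_null_spike(history, broken))
```

A check like this can say that something changed, but not whether the change matters to the business. That interpretive step is the void the rest of this article is about.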

The Rise of the Data Context Engineer

Organizations that rush to eliminate stewards often find themselves trapped in the Context Gap, a state where systems possess the data but lack the logic to use it. A common example is found in global retail, where the term "customer" might be defined in dozens of different ways across departments. Automation can ensure that the data field is filled, but only a Data Context Engineer can ensure that the system applies the correct definition when an AI agent answers a specific financial or support query.
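The customer example can be sketched as a small lookup that forces an agent to name the context it is answering in before it receives a definition. The `Definition` class, the `resolve` function, and the two sample definitions are hypothetical; real definitions would come from the business itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Definition:
    term: str
    context: str
    rule: str


# Hypothetical definitions, for illustration only.
DEFINITIONS = [
    Definition("customer", "finance", "account with at least one paid invoice"),
    Definition("customer", "support", "any person with an open or closed ticket"),
]


def resolve(term, context, definitions=DEFINITIONS):
    """Return the single definition of `term` in `context`.
    Raise instead of guessing when none, or more than one, is registered."""
    matches = [d for d in definitions if d.term == term and d.context == context]
    if len(matches) != 1:
        raise LookupError(
            f"expected one definition of {term!r} in {context!r}, found {len(matches)}"
        )
    return matches[0]


print(resolve("customer", "finance").rule)
```

Raising on a missing or ambiguous definition is a deliberate choice in this sketch: an agent that cannot find the right meaning should stop and escalate instead of silently picking one.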

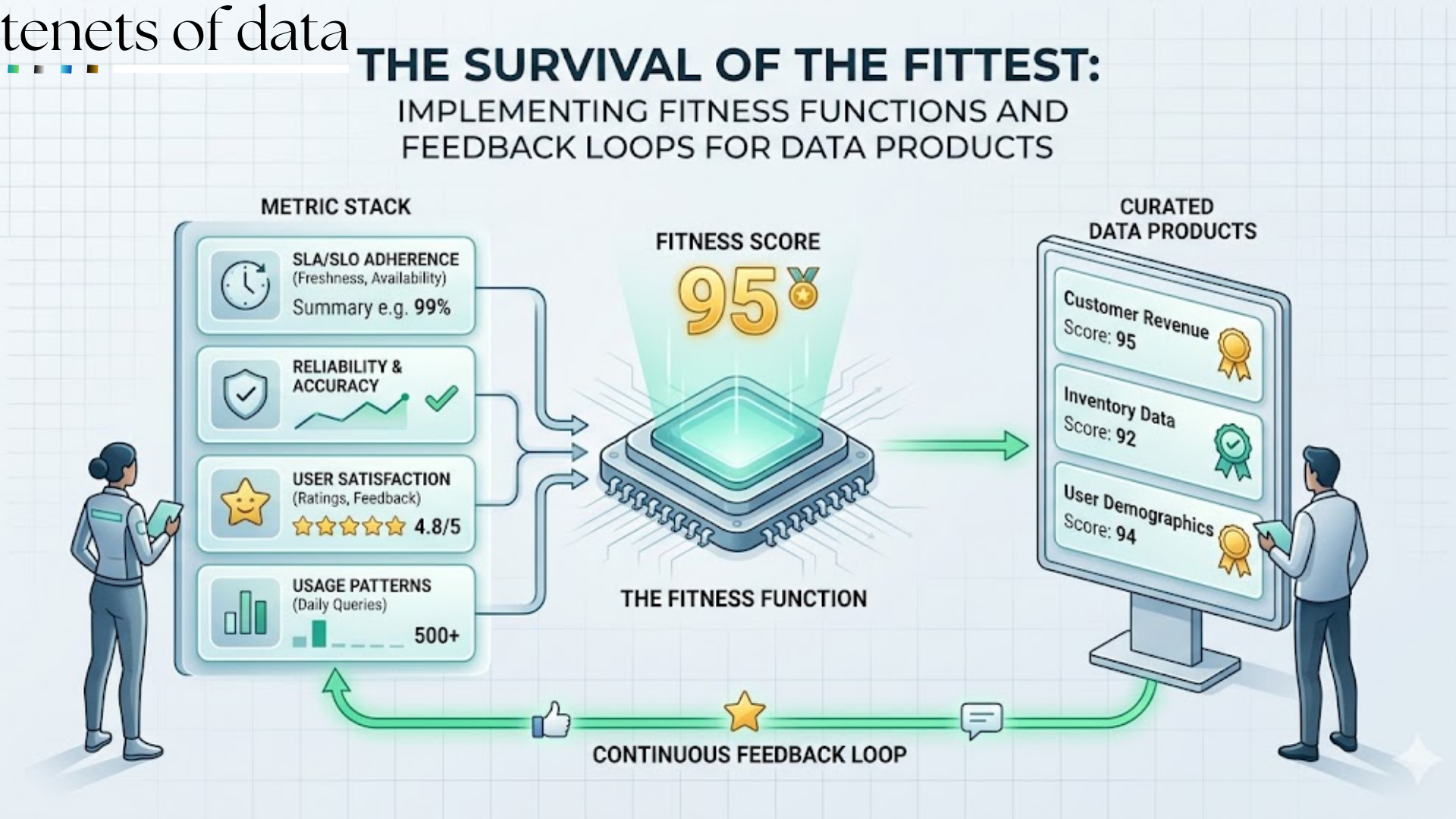

This transition requires a fundamental shift in responsibility. The legacy steward focused on data integrity, ensuring that rows were correct and compliant. The DCE focuses on inference readiness, ensuring the data is architected for machine reasoning. Their success is no longer measured by the percentage of nulls in a database, but by grounding accuracy (the reduction of AI hallucinations and the maintenance of semantic consistency).

The Functional Day-to-day of the DCE

The Data Context Engineer is a systems designer whose primary code consists of business logic and semantic relationships. Their work is centered on several key activities that move beyond documentation into architectural curation.

1. Semantic Alignment and Knowledge Graphs

Rather than simply documenting tables in a static wiki, the DCE builds Knowledge Graphs using tools like Neo4j. This allows them to map the complex relationships between business entities. Their goal is to identify "Ask Sarah" moments, those points in a workflow where unwritten tribal knowledge typically overrides official policy. By capturing these moments, the DCE moves AI from probabilistic guessing to deterministic semantic reasoning, providing a machine-readable map of how variables like "Revenue" and "Marketing Spend" actually interact.
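As a rough illustration of what a machine-readable map of business relationships might look like, the sketch below stores relationships as subject, predicate, object triples in plain Python. It is an in-memory stand-in and does not use Neo4j or its query language. The `KnowledgeGraph` class, the relationship names, and the sample facts are all assumptions.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Tiny directed graph of (subject, predicate, object) triples."""

    def __init__(self):
        self._edges = defaultdict(list)  # subject -> [(predicate, object), ...]

    def add(self, subject, predicate, obj):
        self._edges[subject].append((predicate, obj))

    def related(self, subject, predicate=None):
        """Objects linked from `subject`, optionally filtered by predicate."""
        return [
            o for p, o in self._edges.get(subject, [])
            if predicate is None or p == predicate
        ]

    def path_exists(self, start, goal):
        """Depth-first search along directed edges from `start` to `goal`."""
        stack, seen = [start], set()
        while stack:
            node = stack.pop()
            if node == goal:
                return True
            if node in seen:
                continue
            seen.add(node)
            stack.extend(o for _, o in self._edges.get(node, []))
        return False


kg = KnowledgeGraph()
kg.add("Marketing Spend", "DRIVES", "Qualified Leads")
kg.add("Qualified Leads", "CONVERTS_TO", "Revenue")
# A hypothetical "Ask Sarah" rule, captured as an explicit edge.
kg.add("Revenue", "EXCLUDES", "Refunded Orders")

print(kg.path_exists("Marketing Spend", "Revenue"))
print(kg.related("Revenue", "EXCLUDES"))
```

The point of the explicit `EXCLUDES` edge is that the exception now lives in the graph where an agent can look it up, instead of in one person's head.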

2. Encoding Tribal Knowledge and Context Bug Triaging

DCEs specialize in Business-as-Code. They translate the unwritten rules of human experts into executable artifacts like JSON schemas or structured Markdown "skills" that AI agents can follow. When an AI returns an incorrect answer, the DCE does not simply adjust a prompt. They treat the failure as a context bug. They investigate the underlying data layer to see if a missing relationship or an outdated metric definition caused the error, fixing the problem at the source rather than the surface.
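One way to picture Business-as-Code is an unwritten rule captured as a small JSON artifact that both people and agents can read, plus a function that applies it. The rule, its field names, and `needs_manager_approval` are invented for this sketch and do not follow any particular standard.

```python
import json

# Hypothetical rule artifact; in practice it would live in version control.
RULE_JSON = """
{
  "id": "refund-approval",
  "description": "Refunds above the limit need a manager, unless the customer is VIP.",
  "limit": 500,
  "vip_exempt": true,
  "version": 2
}
"""


def needs_manager_approval(rule, amount, is_vip):
    """Apply the encoded rule to a single refund request."""
    if rule["vip_exempt"] and is_vip:
        return False
    return amount > rule["limit"]


rule = json.loads(RULE_JSON)

print(needs_manager_approval(rule, amount=600, is_vip=False))
print(needs_manager_approval(rule, amount=600, is_vip=True))
```

Under this framing, a context bug is a wrong or stale value in the artifact, such as an outdated `limit`. The fix is a reviewed change to the rule file, not a reworded prompt.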

3. Inference Boundary Management

While AI automates the deletion of data under legal mandates, the DCE manages the context in which the remaining data is retained and used. They define Inference Boundary Maps, technical specifications that dictate what an AI is allowed to derive when it combines different data sources. For instance, a DCE ensures that an AI cannot accidentally infer a user's health status by combining their calendar data with cafeteria purchase records.
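A minimal sketch of an Inference Boundary Map, assuming it can be expressed as a list of source combinations that must not be joined. The structure, the source names, and `check_join` are hypothetical and only mirror the calendar and cafeteria example above.

```python
# Hypothetical boundary map: each entry names sources that must not be
# combined, and the inference that combining them would enable.
FORBIDDEN_COMBINATIONS = [
    {
        "sources": frozenset({"calendar", "cafeteria_purchases"}),
        "blocked_inference": "health_status",
    },
]


def check_join(requested_sources, boundaries=FORBIDDEN_COMBINATIONS):
    """Return the blocked inferences that the requested combination would enable.
    An empty list means the combination crosses no registered boundary."""
    requested = set(requested_sources)
    return [
        b["blocked_inference"]
        for b in boundaries
        if b["sources"] <= requested  # every forbidden source is present
    ]


print(check_join(["calendar", "cafeteria_purchases", "hr_directory"]))
print(check_join(["calendar"]))
```

A caller would run a check like this before an agent is handed the joined data, and refuse or escalate when the returned list is not empty.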

Organizational Impact and the Skill Matrix

The Data Context Engineer typically operates within the office of the Chief Data Officer but serves as a vital liaison to the Chief AI Officer. To succeed, these professionals must master a specific set of modern skills:

- Semantic Modeling: Proficiency in RDF, OWL, or Graph database modeling to create structured meaning.

- Protocol Fluency: Mastery of the Model Context Protocol (MCP) to effectively connect data context to autonomous agents.

- Code Literacy: The ability to use Git for version-controlling context artifacts, such as system instructions and logic rules.

- RAG Optimization: A deep understanding of chunking strategies to ensure AI systems process complete procedures rather than fragmented, useless snippets of information.
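The chunking concern in the last item can be illustrated with a splitter that packs whole sections into chunks and never cuts a procedure in half. This is one simple strategy among many. The function name, the blank-line section convention, and the character budget are assumptions for the sketch.

```python
def chunk_by_section(text, max_chars=500):
    """Split on blank lines, then pack whole sections into chunks.
    A section is never split, so an oversized section becomes its own chunk."""
    sections = [s.strip() for s in text.split("\n\n") if s.strip()]
    chunks, current = [], ""
    for section in sections:
        candidate = f"{current}\n\n{section}" if current else section
        if current and len(candidate) > max_chars:
            # Adding this section would exceed the budget: close the chunk.
            chunks.append(current)
            current = section
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


# Hypothetical document with two procedures separated by a blank line.
doc = (
    "Refund procedure\n1. Verify the order.\n2. Check the refund limit.\n3. Issue the refund."
    "\n\n"
    "Escalation procedure\n1. Open a ticket.\n2. Notify the duty manager."
)

chunks = chunk_by_section(doc, max_chars=100)
for chunk in chunks:
    print(repr(chunk))
```

A fixed-size splitter with the same budget could cut the refund procedure between steps, so a retrieved chunk might contain step 3 without steps 1 and 2. Packing by section trades some unevenness in chunk size for complete procedures.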

Conclusion

This evolution creates what is known as the contextual flywheel. High-quality context leads to superior AI decisions, which builds organizational trust and drives higher adoption. As adoption increases, the system generates more real-world feedback, allowing the DCE to further refine the context layer. In an era where routine data management has become a commodity, the ability to engineer the specific meaning of that data has become the most valuable strategic differentiator for the modern enterprise.