Introduction

The landscape of artificial intelligence is rapidly transitioning from a period of unfettered experimentation to one of rigorous accountability. As global regulatory frameworks mature, the primary challenge for organizations is no longer just defining ethical principles, but embedding them into the technical fabric of their operations. To survive this transition, leadership must move beyond static policy documents that exist in isolation. The following framework outlines a Shift-Left approach, where governance is not treated as a bureaucratic obstacle but as an essential architectural pillar woven into the MLOps pipeline from inception to sunset.

Strategic Design and Privacy Frameworks

The foundation of a governed AI system is established well before the first line of code is written. By prioritizing Impact Assessments and Privacy by Design, organizations can identify specific contexts of use and potential harms early. For high-risk systems, teams must conduct Fundamental Rights Impact Assessments (FRIA) to mitigate risks to health, safety, and civil liberties. These reviews identify affected demographics and specific harm vectors, which then directly inform the technical architecture. In parallel, data protection should be enforced through data minimization and encryption. Modern architectures should favor techniques that enable training without transmitting raw data, such as federated learning, so that privacy is an intrinsic feature rather than a secondary consideration.
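
As an illustration of data minimization, the sketch below keeps only an allow-listed set of fields and replaces a direct identifier with a keyed hash before a record enters a training pipeline. The field names, the `ALLOWED_FIELDS` allow-list, and the `minimize_record` helper are hypothetical and chosen only for this example; a real system would derive the allow-list from its impact assessment and manage the key in a secrets store.

```python
import hashlib
import hmac

# Hypothetical allow-list: only the fields the model is assumed to need.
ALLOWED_FIELDS = {"age_band", "region", "tenure_months"}


def minimize_record(record: dict, secret_key: bytes, id_field: str = "user_id") -> dict:
    """Keep allow-listed fields and replace the identifier with a keyed hash."""
    minimized = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    if id_field in record:
        # HMAC-SHA256 gives a stable pseudonym that cannot be recomputed
        # without the secret key.
        digest = hmac.new(secret_key, str(record[id_field]).encode("utf-8"), hashlib.sha256)
        minimized["pseudonym"] = digest.hexdigest()
    return minimized


if __name__ == "__main__":
    raw = {"user_id": 42, "email": "jane@example.com", "age_band": "30-39", "region": "EU"}
    print(minimize_record(raw, secret_key=b"demo-key-do-not-use"))
```

Pseudonymized data of this kind is still personal data under most privacy regimes, so this step complements encryption and access control rather than replacing them.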

Development Rigor and Technical Validation

During the development phase, the focus transitions to Test, Evaluation, Verification, and Validation (TEVV), which functions as a series of automated gates within the CI/CD pipeline. Rigorous data governance is required to detect and manage both computational and systemic biases, ensuring datasets are representative and outcomes are equitable. For high-risk applications, Model Explainability (XAI) is a mandatory design requirement, providing the transparency users need to understand the logic behind specific predictions. This technical clarity supports Human Oversight by equipping operators to explain outputs to those affected. Furthermore, models must undergo intensive adversarial testing, or red teaming, to identify vulnerabilities such as data poisoning and evasion, and to benchmark the system’s resilience before it reaches production.
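
One way to picture an automated TEVV gate is a small fairness check that fails the build when outcomes diverge too much between groups. The sketch below uses demographic parity difference as the metric; that choice, the `bias_gate` helper, and the 0.1 threshold are assumptions made for illustration, not requirements stated by any framework. The right metric and threshold depend on the use case.

```python
from collections import defaultdict


def demographic_parity_gap(predictions, groups):
    """Return the largest difference in positive-prediction rate between groups."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    if not totals:
        raise ValueError("no predictions supplied")
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


def bias_gate(predictions, groups, max_gap=0.1):
    """Return True when the gap is within the (hypothetical) threshold."""
    return demographic_parity_gap(predictions, groups) <= max_gap


if __name__ == "__main__":
    preds = [1, 0, 1, 1, 0, 0]
    grps = ["a", "a", "a", "b", "b", "b"]
    gap = demographic_parity_gap(preds, grps)
    print(f"gap={gap:.3f} pass={bias_gate(preds, grps)}")
```

In a CI/CD job, a failing gate would typically exit with a non-zero status so the pipeline blocks promotion of the model artifact.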

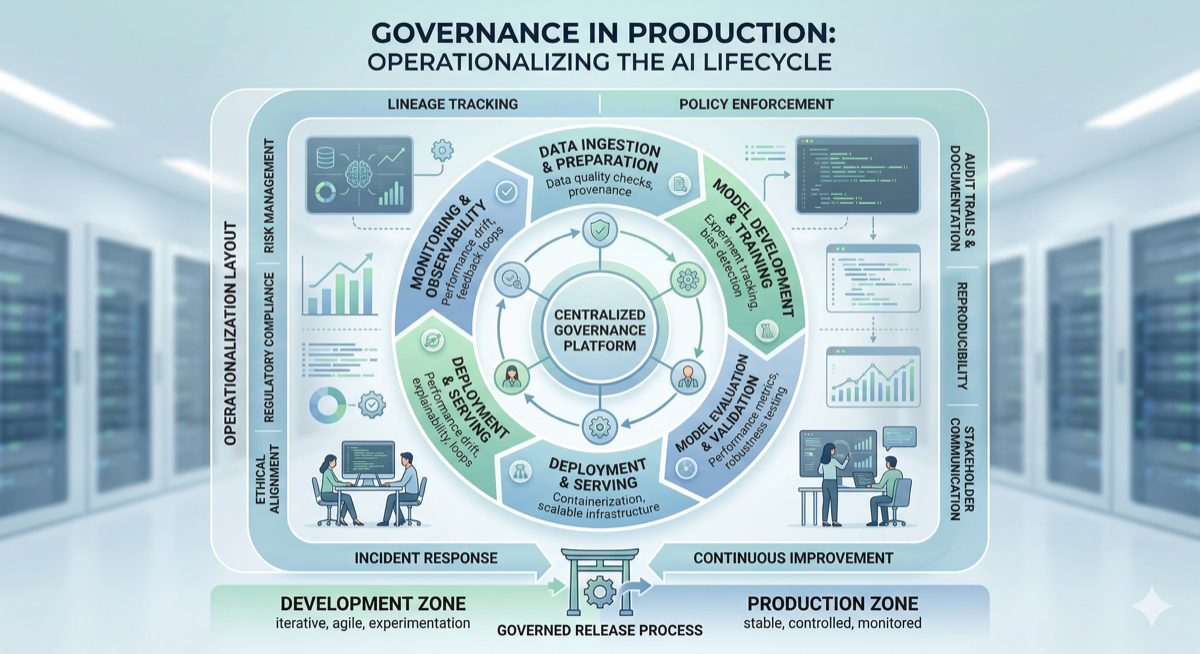

Deployment Traceability and Accountability

Governance during deployment ensures that every production model is traceable and documented. Each model must be accompanied by Model Cards and technical documentation that detail performance metrics, training data characteristics, and operational limits. This transparency is vital for regulatory clarity and internal accountability. Organizations should also implement comprehensive Lineage Tracking to monitor the origin of data, the transformations applied, and the specific model versions used to generate results. For high-risk systems, the automatic recording of event logs is required to maintain a high level of traceability over the entire operational lifespan.
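
A lineage entry can be as simple as a structured record that ties a model version to a fingerprint of its training data and the transformations applied. The sketch below is a minimal, standard-library illustration; the `LineageRecord` schema and its field names are hypothetical and not drawn from any particular model card or registry standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class LineageRecord:
    """Hypothetical lineage entry linking a model version to its inputs."""
    model_name: str
    model_version: str
    dataset_sha256: str
    transformations: list
    metrics: dict
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def fingerprint(data: bytes) -> str:
    """Content hash used to identify the exact dataset snapshot."""
    return hashlib.sha256(data).hexdigest()


def to_log_line(record: LineageRecord) -> str:
    """Serialize the record as one JSON line for an append-only event log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = LineageRecord(
        model_name="credit-scoring",
        model_version="1.4.0",
        dataset_sha256=fingerprint(b"example training snapshot"),
        transformations=["drop_nulls", "standard_scale"],
        metrics={"auc": 0.91},
    )
    print(to_log_line(record))
```

Writing one such line per training run and per deployment gives auditors a way to trace a production prediction back to a specific model version and data snapshot.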

Continuous Monitoring and Human Oversight

The governance process does not conclude at deployment; it requires a robust Post-Market Monitoring system to manage the evolving behavior of AI in real-world scenarios. Drift Detection is a primary requirement: automated alerts should trigger retraining or manual intervention when a model’s performance degrades due to shifting data distributions. Systems must also be engineered for effective Human-in-the-Loop (HITL) oversight. This includes providing kill switches to halt operations and training human operators so that they do not succumb to Automation Bias, the tendency to over-rely on machine suggestions. Finally, any serious malfunctions or incidents that result in harm must be documented and reported to the relevant authorities promptly to facilitate corrective actions.
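
Drift detection can be sketched with the Population Stability Index (PSI), which compares the binned distribution of a feature in production against a training-time baseline. PSI is one common choice among several (statistical tests such as Kolmogorov-Smirnov are another); the 0.2 alert threshold below is a widely quoted rule of thumb rather than a regulatory value, and the function names are hypothetical.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a production sample of one feature."""
    lo, hi = min(expected), max(expected)
    # Fall back to a unit width when the baseline is constant.
    width = (hi - lo) / bins or 1.0

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp out-of-range production values into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        eps = 1e-6  # avoids log(0) for empty bins
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(expected, actual, threshold=0.2):
    """Return True when PSI exceeds the (assumed) alert threshold."""
    return population_stability_index(expected, actual) > threshold


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]
    production = [v + 0.5 for v in baseline]
    print(drift_alert(baseline, production))
```

An alert from a check like this would feed the retraining or manual-review path described above, rather than acting on the model automatically.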

The Engineering Alignment

To make these requirements actionable, engineering teams must integrate governance checks directly into the Shift-Left Pipeline at four key stages:

- Commit Stage: Automated scans are conducted for Personally Identifiable Information (PII) and fundamental data quality metrics.

- Test Stage: The system executes automated bias evaluations and adversarial robustness checks against model artifacts.

- Deploy Stage: The pipeline automatically generates model cards and updates the central AI inventory.

- Operate Stage: Real-time telemetry is monitored continuously to detect drift and anomalies as they occur.
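
To make the Commit Stage concrete, the sketch below scans text for two kinds of personally identifiable information using regular expressions. The patterns (email addresses and US-style Social Security numbers) and the `scan_for_pii` helper are illustrative assumptions; pattern matching of this kind catches only obvious cases and is usually paired with a dedicated detection tool.

```python
import re

# Hypothetical, deliberately small pattern set for illustration.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scan_for_pii(text):
    """Return (line_number, label) pairs for every line that matches a pattern."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PII_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, label))
    return findings


if __name__ == "__main__":
    sample = "id,contact\n1,jane@example.com\n2,123-45-6789"
    for line_no, label in scan_for_pii(sample):
        print(f"line {line_no}: possible {label}")
```

Run as a pre-commit hook or early CI step, a non-empty findings list would block the commit until the data is removed or masked.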

Conclusion

Ultimately, the Shift-Left approach to AI governance transforms a complex regulatory obligation into a standardized engineering practice. By embedding safety and fairness into the MLOps lifecycle, organizations build systems that are not only compliant but also inherently resilient. This strategy ensures that as AI capabilities scale, the mechanisms of human accountability and technical integrity scale alongside them. Moving forward, the goal is to foster a culture of responsible innovation where governance is recognized as a competitive advantage rather than a burden, ensuring that technology remains a safe and reliable asset for society.